This blog post picks up my earlier exploration of Full Spectrum “Inclusive” Credentials. In this post, I’m going to explore how you can structure different types of badges for different recognition purposes and demonstrate the fitness of a badge for its purpose by including what I like to call a “badge content manifest”, otherwise known as a Critical Information Summary. Or more simply, a list of Requirements for the badge.

Before starting, I’ll say that this leverages some really interesting work I’ve been doing recently, helping develop a taxonomy and framework for the Inter-American Development Bank’s CredencialesBID initiative, which started badging in 2018 and has racked up a ton of experience in a relatively short time. I see them as innovators in the space. It also draws on work with other clients before and since, both big and small. The result has been the development of a generic “meta framework” that we provide to clients, along with badge content templates whose scaffolded prompts match the requirements of the different badges in the taxonomy. This framework and taxonomy are flexible and adjustable (and evolving), but are also robust and coherent and, most importantly, are based on experience and emergent practice, NOT pre-conceived policy. The more formal badge structures can be adapted for PLAR/RPL evaluation for credit, but the primary purpose is to clearly communicate the claims that badges are making and how those claims are supported – for ANY audience.

The taxonomy was developed for clients building badge systems on CanCred.ca and Open Badge Factory platforms, leveraging affordances like badge sharing, but the principles are actually pretty universal. I recently mapped a version of the framework for a UN agency currently using a competing platform.

Mapping a badge taxonomy

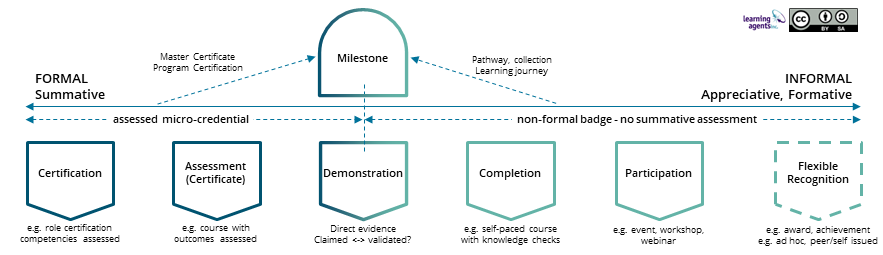

First, let’s review the top layer of the key diagram I shared 2 posts ago:

NB1: A Demonstration badge can be an evidence package or a live demo. It may manifest as an assessed micro-credential, or it may support self-claimed skills and achievements, or maybe a combination of the two, hence the dotted line, splitting it down the middle.

NB2: I made one revision from the previous version of this: digital badge has become “non-formal badge” because, in my opinion, micro-credentials are really just “formal badges”. I do understand the verbal shorthand of micro-credentials vs. digital badges up here in Canada, but I think it’s a bit binary and can lead to confusion, as this post may start to demonstrate.

Notice the examples provided underneath the different types of badges, such as “course with outcomes assessed” for the Assessment Certificate, a very popular type of micro-credential. Then there is the Completion badge, also very popular, but not really a micro-credential if there’s no summative assessment. Unlike an Assessment badge, which you should be able to “fail” (otherwise it’s not really summative), you could probably bang away at the knowledge check questions in the Completion badge until you got them. If you evaluated specific examples of these different types of badges in the wild, you would expect to see some indication of the difference in the content of the badge, right? Well, not always, as the current leading candidate for the “Badge Hall of Shame” demonstrated in my last blog post.

Critical Information Summary: an evaluation tool

Enter the badge manifest, or “Critical Information Summary”, essentially a checklist of content elements that badge viewers should expect to see, so they can properly evaluate the badge. A “recipe for recognition”, if you will. I first heard this term in Oliver (2019), and the concept has since been adopted by frameworks such as Australia’s National Microcredentials Framework (“Critical information requirements and minimum standards”) and the EU’s Recommendation on a European approach to micro-credentials for lifelong learning and employability (“European standard elements”). McGreal and Olcott (2022) have also developed a useful list, based on secondary research (Micro-credentials reference framework for university leaders). It’s worth mentioning eCampusOntario’s Micro-credential Principles and Framework (2020) as an early formative influence here in Canada, but it’s a bit more high level – somewhere between a framework and a checklist.

Problem is, these are all for micro-credentials. Can you say monoculture? What about less formal types of recognition, that might be just as useful and valuable, if not more so than an assessed course credential? The various webinars and MOOCs that didn’t require a summative assessment – useless? Not really. Even better, that employer testimonial, or that evidence package you assembled to support your self-claimed badge, or those endorsements you got from your co-workers? Or the Guru badge you were crowned with in your community of practice? Maybe that award you won in the hackfest at the makerspace? Or the heartfelt “Thank you” badge from the community association that actually tells the story of how you made a difference last summer? These are all different types of recognition, calling for different types of badges with different requirements to make them fit for their respective purposes. The authentic power of badged recognition often comes out of the specific context: the story that the badge can tell about you as an individual that other people might want to know and work with.

Building a content menu for a comprehensive taxonomy

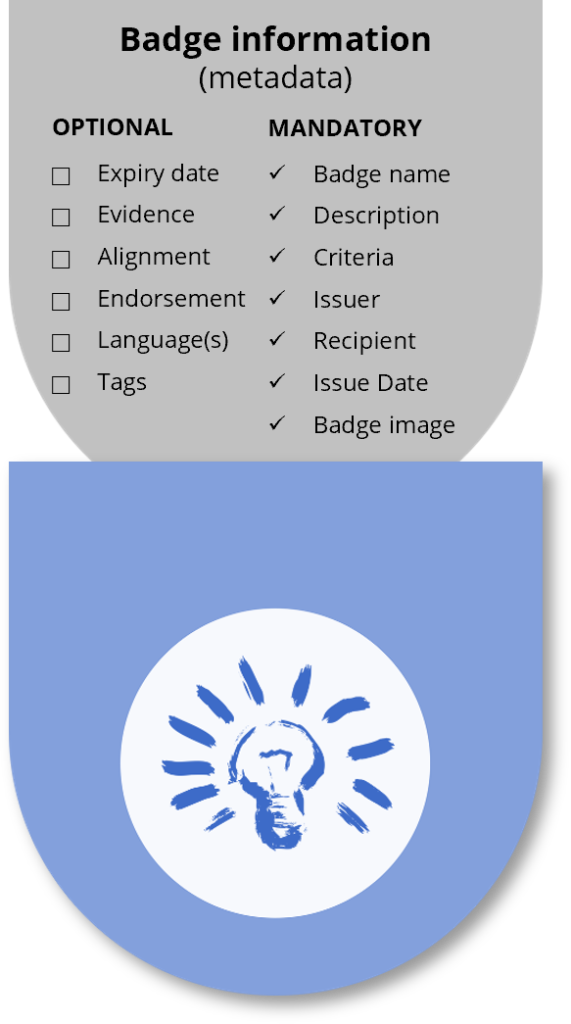

The Open Badge standard itself, with its mandatory and optional fields, makes a great starting point for differentiating badges. Fields such as Description, Evidence, Alignment and Endorsement can meaningfully describe what’s being recognized, if you take care in completing them.

But the most important field for many (including me) is Criteria, what I like to call the beating heart of the badge – what does it take to earn this badge? Problem is, Criteria is a blank canvas, because Open Badges are flexible. That is a blessing and a curse – it has led to some pretty poorly written criteria (did I mention the Badge Hall of Shame?).
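To make the “take care in completing them” point concrete, here is a minimal sketch of a pre-publication check that flags descriptive fields left blank. It assumes a badge draft held as a plain Python dictionary; the key names simply mirror the fields mentioned above and the sample values are invented for illustration. It is not the schema of the Open Badge standard or of any platform.

```python
# Hypothetical badge draft: keys mirror the fields discussed above,
# values are invented for illustration only.
badge_draft = {
    "name": "Workshop Facilitation",
    "description": "Recognizes facilitating a full-day community workshop.",
    "criteria": {"narrative": ""},   # the blank canvas, still blank
    "evidence": ["https://example.org/portfolio/workshop-report"],
    "alignment": [],
    "endorsement": [],
}

# Fields a viewer would look at to understand what is being recognized.
DESCRIPTIVE_FIELDS = ("description", "criteria", "evidence", "alignment", "endorsement")


def is_filled(value):
    """Return True if the value carries any non-blank content."""
    if isinstance(value, str):
        return bool(value.strip())
    if isinstance(value, dict):
        return any(is_filled(v) for v in value.values())
    if isinstance(value, (list, tuple)):
        return any(is_filled(v) for v in value)
    return value is not None


def empty_fields(badge):
    """List the descriptive fields that are missing or blank."""
    return [name for name in DESCRIPTIVE_FIELDS if not is_filled(badge.get(name))]


print(empty_fields(badge_draft))
```

A check like this only tells you a field is empty, not whether what was written is any good. That still takes a human reader.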

Guidance for Criteria

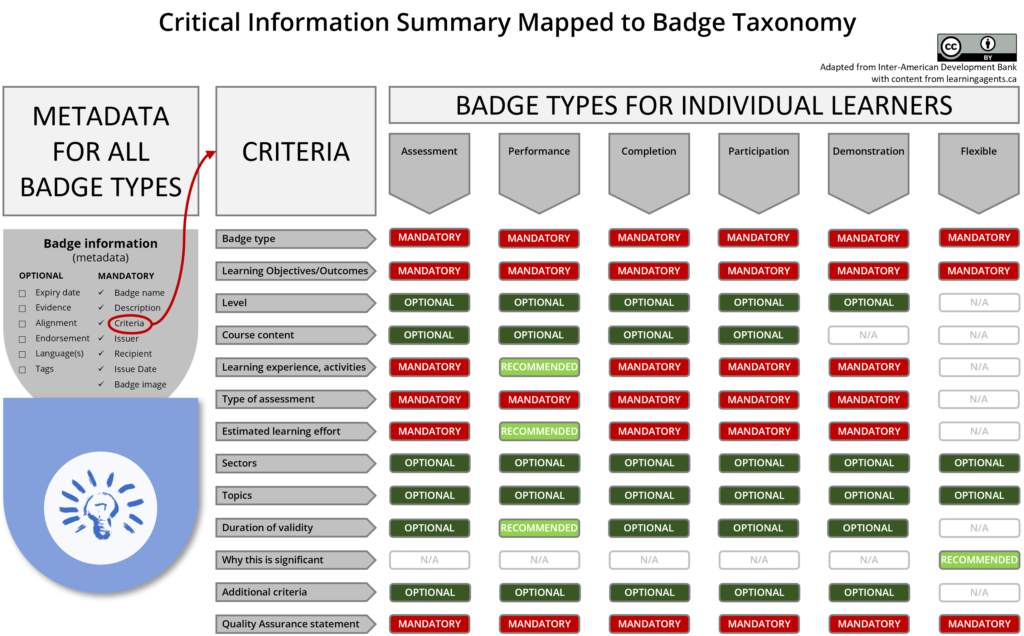

To help manage quality AND fitness for purpose, we have developed custom Criteria content models for the different types of badges. Here is a generic version of a Critical Information Summary menu that I helped develop for the Inter-American Development Bank’s CredencialesBID initiative. The Criteria field breaks out into a series of options, recommendations and mandatory requirements:

(NB: I removed some really interesting community of practice badges to reduce complexity, but I also included a Demonstration badge, the newest element in my evolving taxonomy, not part of the CredencialesBID taxonomy.)

The Open Badge fields and the Critical Information Summary menu for Criteria form the basis of the content templates for each badge, each with its own text scaffolding. These templates can be packaged as text Requirements documents for offline preparation or as badge “blanks” that can be copied into client badge environments to guide creation and can be further adapted to fit local needs.
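For readers who think in data structures, here is one way such a menu could be sketched: each badge type maps Criteria elements to a mandatory, recommended or optional level, and a draft is reviewed against that map. The badge types come from the taxonomy above, but the element names, the levels assigned to them and the `review_criteria` function are my own illustrative assumptions, not the actual CredencialesBID menu.

```python
MANDATORY, RECOMMENDED, OPTIONAL = "mandatory", "recommended", "optional"

# Hypothetical Critical Information Summary menu for the Criteria field.
# Element names and levels are invented for illustration.
CRITERIA_MENU = {
    "assessment": {
        "learning_outcomes": MANDATORY,
        "assessment_method": MANDATORY,
        "effort_hours": RECOMMENDED,
        "evidence_link": OPTIONAL,
    },
    "completion": {
        "activities_completed": MANDATORY,
        "learning_outcomes": RECOMMENDED,
        "assessment_method": OPTIONAL,
    },
    "demonstration": {
        "skill_claimed": MANDATORY,
        "evidence_link": MANDATORY,
        "reviewer": RECOMMENDED,
    },
}


def review_criteria(badge_type, criteria):
    """Compare a draft's Criteria elements with the menu for its badge type."""
    menu = CRITERIA_MENU[badge_type]
    # An element counts as provided only if it has non-blank content.
    provided = {key for key, value in criteria.items() if str(value).strip()}

    def missing(level):
        return sorted(k for k, lvl in menu.items() if lvl == level and k not in provided)

    return {
        "missing_mandatory": missing(MANDATORY),
        "missing_recommended": missing(RECOMMENDED),
        "unexpected": sorted(provided - set(menu)),
    }


draft = {
    "activities_completed": "Attended all four webinar sessions",
    "learning_outcomes": "",
}
print(review_criteria("completion", draft))
```

The same draft reviewed as an Assessment badge would come back with mandatory elements missing, which is the kind of difference a viewer should be able to see in the badge itself.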

Since they introduced their badge Requirements documents, CredencialesBID has reported a significant reduction in costly revisions and a much smoother badge production process from the enhanced clarity. So it’s worth doing!

In later posts, I’ll provide a bit more detail about the text scaffolding and I’ll also revisit the design of badge images – not just as branding opportunities, but as signposts for the meaning of your badges – making learning, skills and achievements more visible.


Hi Don. This was very useful for me but I have what might be a “beginners” question – my apologies in advance if I have missed something that is widely known.

One of the misgivings that has been expressed to me in regards to micro-credentials is the challenge of an employer (or an educational institution) receiving an application for a job or course that contains a large number of digital credentials. Is anyone working on digital tools that will ease their burden of validating and evaluating these credentials? It seems to me that the taxonomy you describe will result in more useful information being stored within the badge, but we will still need tools to extract and process this information.

Thanks for any pointers you can give me on this.

Brian Mulligan

Hi Brian,

Thanks for your interest and the great question. My somewhat scattered thoughts here may be the start of a new blog post.

First, employer awareness is a huge issue – there needs to be employer demand for badges to be useful as employability tools – the “pull” (some argue that is NOT the only purpose of badges, more micro-credentials, but leave that for now.)

Assuming employer interest, how to make sense of all those badges, right?

There are a number of solutions in play:

– AI/Natural Language Processing

– HR Open is the current version of the HR-XML standard, that’s supposed to be better than just chewing up resumes with OCR and text processing for Applicant Tracking Systems. They are introducing a new standard in May at their annual meeting. It is designed to be able to read Open Badges as Verifiable Credentials (VCs), including structured “linked data” that can be mapped to other structured data like skills frameworks: “Learning and Employment Records – Resume Standard” (LER-RS).

– Meanwhile, 1EdTech has merged the working groups for Open Badges and the Comprehensive Learning Record (CLR), which will both become compliant with the same VC standards used for things like driver’s licences. And there’s crossover to HR Open.

I may not have all the above completely straight, but the point is that standards are being set for machine readability and discoverability of recruitment data that will increasingly be supplemented with AI/ML/NLP.

I have mixed feelings about all this; we’re more than the aggregated skills in our LERs. I understand the scalability issue, but I still think human connections are more important than machine readable skills. (“They know SAP but what are they like to work with?”). Plus, I feel a lot of the use cases feature F500 employers and most of the economy is driven by the SMEs, who often still hire by guess and by gosh.

I guess it’s a combination of things: summative-type badges to get you past the early filters, then more holistic badges later on in the process.. plus real interviews! Badges won’t get you the job.. see 2 blog posts back!

Plus, I’d like to see lots more about the entire talent pipeline, rather than just the recruitment section of it. Which is another way of saying there’s room for more community and human connection in all this – not just a single candidate being evaluated by machine-readable criteria.

Thanks, Don. I agree that this is relevant to the entire talent pipeline but I just use the recruitment challenge to illustrate the problem of employers/institutions trying to make sense of badges submitted to them. This issue is emerging because some people are suggesting that micro-credentials (MCs) are a threat to degrees. Just like degrees, employers know that these are only partial indicators of competence or suitability. However, it is unlikely that they will put value on collections of MCs if they cannot evaluate them as easily as degrees (which is not necessarily that easy).

I feel we have another chicken and egg problem here. Employers, and thus learners, will place low value on MCs if they are difficult to verify and evaluate, and this will continue to be difficult until we have the digital tools we need. This of course will slow down the development of MCs.

By the way, I don’t think these tools need to be fully automated, but instead would assist a human with the grunt work. I imagine a system where the reviewer would have a dashboard for each candidate, with suggested scores against a set of job requirements, and where they could click on any score and see the evidence. That evidence might include a digital credential with some information on the reputation or reliability of the issuing organisation (the score could, of course, be changed by the reviewer).

To be honest, HR is not my field, but I do feel we need HR professionals to say “this will work for us” – and to get them to work they need the right tools. I might also say that Microcredentials will not succeed without Digital Credentials and that the evaluation tools are just as important as (if not more so than) issuing tools.

Hi Brian,

Sure, that seems reasonable to me. And HR Open and Credential Engine are feeding into that, with linked data tools to provide some structured guidance for the AI. I also think Endorsement will become more scalable and contextual at the same time.

What about after you get hired.. workforce development? Extending that talent pipeline.. too much focus on recruitment and school to WoW transition, IMO.

Hi Brian,

I am curious how an employer would measure the value of a degree in the success of a new hire. Would they have a regimen of assessments and performance evaluations that they can relate to the content of the degree? If so, they should be able to relate those to the metadata of Open Badges, Verifiable Credentials, LER-RS data, or even narrative text claims of skill/competency in a plain old-fashioned resume. I think that AI will need supervised learning in order to produce reliable results in matching claims that don’t include an ID # (for instance, Employer wants Candidate with Skill #123456 defined at example.com and Candidate submits application including Badge with Skill #123456 as part of the Badge Criteria — this is a simple match, but matching narratives rather than numbers would be much more challenging). I don’t think that the current AI used in Applicant Tracking Systems does a good job at all and there is no visibility or explainability in how the AI was trained.

Perhaps a trusted third party could perform quality assessment of the credentials. Ideally, there would be statistics that measured success of credential holders (badges, degrees) in the workforce, but there is so much that goes into success that it seems like a very difficult challenge to turn into a measurable process.
